Interesting new paper out in PLOS Computational Biology: Bayesian Reconstruction of Disease Outbreaks by Combining Epidemiologic and Genomic Data.

Full Citation: Jombart T, Cori A, Didelot X, Cauchemez S, Fraser C, et al. (2014) Bayesian Reconstruction of Disease Outbreaks by Combining Epidemiologic and Genomic Data. PLoS Comput Biol 10(1): e1003457. doi:10.1371/journal.pcbi.1003457

Abstract:

Recent years have seen progress in the development of statistically rigorous frameworks to infer outbreak transmission trees (“who infected whom”) from epidemiological and genetic data. Making use of pathogen genome sequences in such analyses remains a challenge, however, with a variety of heuristic approaches having been explored to date. We introduce a statistical method exploiting both pathogen sequences and collection dates to unravel the dynamics of densely sampled outbreaks. Our approach identifies likely transmission events and infers dates of infections, unobserved cases and separate introductions of the disease. It also proves useful for inferring numbers of secondary infections and identifying heterogeneous infectivity and super-spreaders. After testing our approach using simulations, we illustrate the method with the analysis of the beginning of the 2003 Singaporean outbreak of Severe Acute Respiratory Syndrome (SARS), providing new insights into the early stage of this epidemic. Our approach is the first tool for disease outbreak reconstruction from genetic data widely available as free software, the R package outbreaker. It is applicable to various densely sampled epidemics, and improves previous approaches by detecting unobserved and imported cases, as well as allowing multiple introductions of the pathogen. Because of its generality, we believe this method will become a tool of choice for the analysis of densely sampled disease outbreaks, and will form a rigorous framework for subsequent methodological developments.

Check out the nice figure on a SARS outbreak:

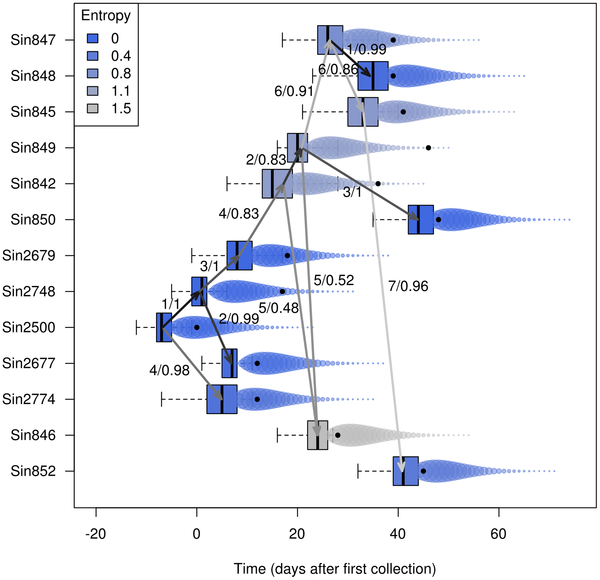

Figure 5. Results of the analysis of the SARS data using outbreaker. This figure summarizes the reconstruction of the outbreak, showing putative transmissions (arrows) amongst individuals (rows). Arrows represent ancestries with at least 5% of support in the posterior distributions, while boxes correspond to the posterior distributions of the infection dates. Arrows are annotated by number of mutations and posterior support of the ancestries, and colored by numbers of mutations, with lighter shades of grey for larger genetic distances. The actual sequence collection dates are plotted as plain black dots. Bubbles are used to represent the generation time distribution, with larger disks used for greater infectivity. Shades of blue indicate the degree of certainty for inferring the origin of different cases, as measured by the entropy of ancestries (see methods and equation 12): blue represents conclusive identification of the ancestor of the case (low entropy), while grey shades are uncertain (high entropy).
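To make the "entropy of ancestries" idea in that caption concrete, here is a minimal sketch in Python. It is not the paper's code and not the outbreaker API. It assumes the measure is the ordinary Shannon entropy of how often each candidate ancestor shows up in the posterior samples for one case, which may differ in detail from equation 12 of the paper. The function names and the toy sample lists are hypothetical.

```python
import math
from collections import Counter


def ancestry_frequencies(sampled_ancestors):
    """Posterior frequency of each candidate ancestor for one case.

    `sampled_ancestors` is a hypothetical list holding the ancestor
    assigned to the case in each posterior sample.
    """
    counts = Counter(sampled_ancestors)
    total = sum(counts.values())
    return {ancestor: n / total for ancestor, n in counts.items()}


def ancestry_entropy(sampled_ancestors):
    """Shannon entropy (in nats) of the ancestor frequencies.

    Assumption: plain Shannon entropy; the paper's equation 12 may
    use a different normalization.
    """
    freqs = ancestry_frequencies(sampled_ancestors)
    return sum(p * math.log(1.0 / p) for p in freqs.values())


def supported_ancestries(sampled_ancestors, min_support=0.05):
    """Ancestors with at least `min_support` posterior frequency,
    mirroring the 5% threshold used for the arrows in Figure 5."""
    freqs = ancestry_frequencies(sampled_ancestors)
    return {a: p for a, p in freqs.items() if p >= min_support}


# Toy posterior samples (made up for illustration only).
confident_case = [3] * 100            # always the same ancestor
uncertain_case = [1] * 50 + [2] * 50  # split between two ancestors

print(ancestry_entropy(confident_case))
print(ancestry_entropy(uncertain_case))
print(supported_ancestries([1] * 97 + [2] * 3))
```

A case whose ancestor is the same in every sample gets entropy zero (the "blue" end of the figure), while a case split evenly between two ancestors gets entropy log 2 (toward the "grey" end).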

And then the consensus transmission tree:

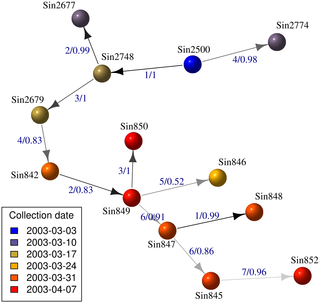

Figure 6. Consensus transmission tree reconstruction of the SARS outbreak. This figure indicates the most supported transmission tree reconstructed by outbreaker. Cases are represented by spheres colored according to their collection dates. Edges are colored according to the corresponding numbers of mutations, with lighter shades of grey for larger numbers. Edge annotations indicate numbers of mutations and frequencies of the ancestries in the posterior samples.

Outbreaker is available here: http://cran.r-project.org/web/packages/outbreaker/index.html

I also like the first line of their Acknowledgements:

We are thankful to Sourceforge (http://sourceforge.net/) and CRAN (http://cran.r-project.org/) for providing great resources for developing and hosting outbreaker.

Definitely worth checking out.